As we already know, March was a really black month for Microsoft updates. I just want to mention a few of them:

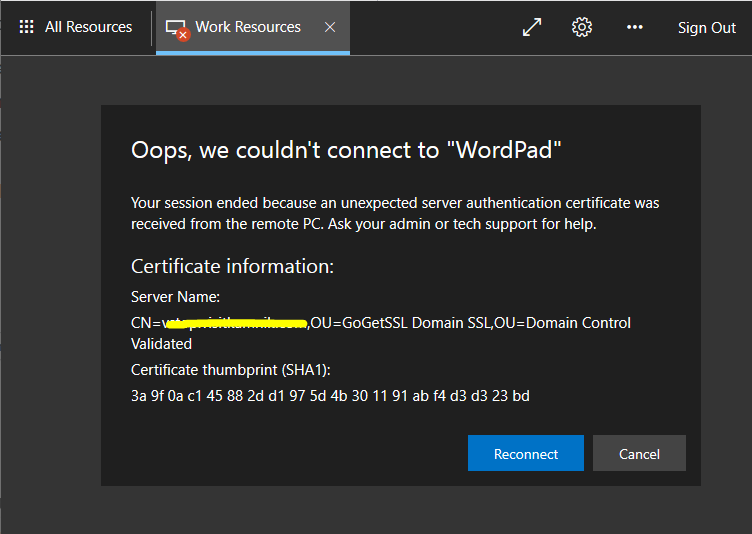

- Exchange had a remote code execution vulnerability, and a lot of organizations were hacked. A patch is already available; look here for more info. Microsoft's timeline for this exploit is interesting and is publicly available here (or just take a look at the date of this post).

- Windows DNS servers also had a remote code execution vulnerability, and patches for it are already available too.

- Windows 10 crashed with a blue screen when printing to some printers.

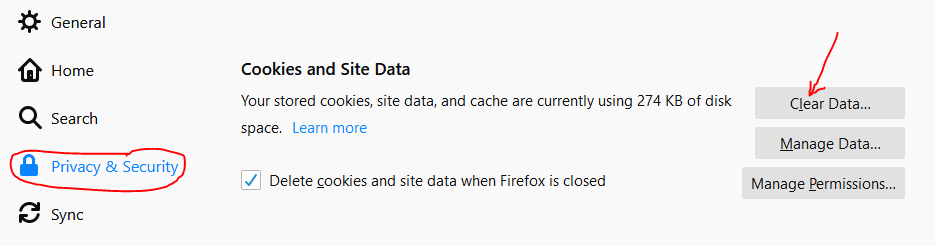

The last one was less critical for system administrators, but if you ask the helpdesk, it caused them a lot of work: they had to uninstall the latest update, KB5000802. This is a security update, and uninstalling it should not be considered best practice (but there was no other way). At the same time, if you did not want the same problem to come back the next day, you had to defer Windows Update, which is not a recommended approach either. As of today, there is also a patch for this latest mistake. It is available at this link. Please install it and turn your update process back on.